Version: Wwise SDK 2023.1.3

When looking at a Capture session, it is not uncommon to have a lot of capture data visible at a given time, making it difficult to pinpoint the specific information you want to investigate. The filtering toolbar lets you narrow your search and focus on the sound, problem, or project area that you're interested in. Filter criteria can be applied globally across all profiling views or locally within a specific view, and can be reset or synchronized at any time. Additionally, Mute/Solo filtering allows you to "see only what you hear" when profiling, so that you can leverage your ears, while filter expressions can further narrow the results. Combined, these filtering methods make capture data considerably easier to organize and navigate.

A filtering toolbar can be found in the following views:

The filtering toolbar enables you to reduce the amount of information displayed in the view and focus on the elements that are relevant to you.

Filter expressions let you include, exclude, and use wildcards in conjunction with text, game objects, busses, and Events. This enables you to create complex filtering schemes that further reduce the scope of what's visible as you refine and navigate the profiling views. Filter expressions can be applied either globally across all of the profiling views or locally within a specific view, so you can navigate the capture data in the way that best brings the relevant part of the project into focus.

The text in the filter searches for the following object names, by default:

For more information on Filter Expressions, see Using Profiler Filter Expressions.
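The idea of combining include terms, exclude terms, and wildcards can be illustrated with a small sketch. This is not the Profiler's expression syntax (see Using Profiler Filter Expressions for that); the function `filter_names`, its parameters, and the object names are all hypothetical and only model the general behavior described above.

```python
from fnmatch import fnmatchcase


def filter_names(names, include=(), exclude=()):
    """Keep names that match any include pattern and no exclude pattern.

    Patterns use shell-style wildcards (* and ?). Matching is made
    case-insensitive here by lowercasing both sides (an assumption of
    this sketch). An empty include list keeps everything not excluded.
    """
    def matches(name, patterns):
        return any(fnmatchcase(name.lower(), p.lower()) for p in patterns)

    return [
        name for name in names
        if (not include or matches(name, include)) and not matches(name, exclude)
    ]


# Hypothetical object names, for illustration only.
captured = [
    "Play_Footstep_Grass",
    "Play_Footstep_Metal",
    "Play_Music_Menu",
    "Stop_Music_Menu",
]

# Show footsteps, but hide anything mentioning metal.
footsteps_not_metal = filter_names(
    captured, include=["*footstep*"], exclude=["*metal*"]
)
```

Starting from the full list and narrowing it with patterns mirrors the "view everything and filter the results" approach: nothing has to be registered in advance for it to be found.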

The Game Sync Monitor has been updated to show Game Sync values for playing voices over time, on a per-game object basis. You no longer need to explicitly add RTPCs to the Game Sync Watches list: the monitor now displays all RTPC values set on currently playing game objects. A "view everything and filter the results" philosophy is at the core of Profiler Filtering and allows for swift navigation of capture data for a quicker and clearer understanding of your project.

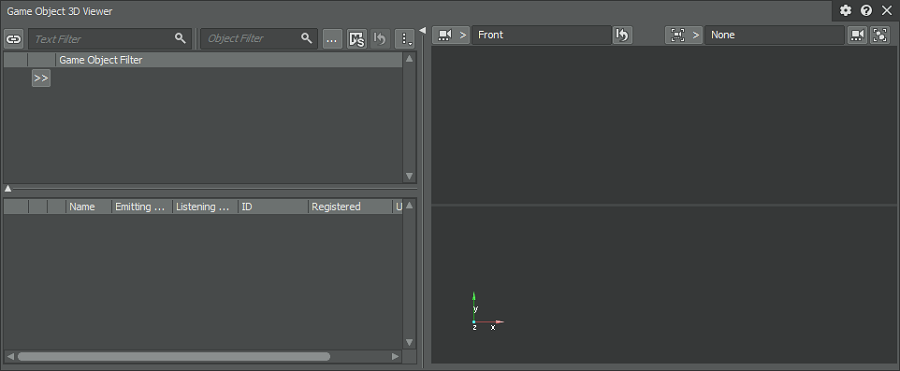

The Game Object 3D Viewer has been redesigned to leverage the full spectrum of capture data while providing the same profile filtering available in the rest of the profiling views. Additionally, you can filter Game Objects by name and pin these Game Object filters so they persist across profiling sessions, which makes for a workflow that can easily be repeated.

Voice Monitor

The Voice Monitor now includes a redesigned filter bar.

Voice Explorer

The Voice Explorer displays the playing voices for the current cursor time set for the profiling capture session. The voices are organized by playing instance, where each playing instance corresponds to an Event being posted. For example, calling PostEvent twice on the same Event generates two playing instances in the Voice Explorer. The Voice Explorer offers display options to create rows for the Game Object, Event name, and Event target.
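The relationship between posted Events and playing instances can be modeled with a toy sketch. This is not the Wwise SDK: `ToyVoiceExplorer`, `post_event`, and `rows_by` are hypothetical names, and the only behavior borrowed from the real engine is that each post yields its own playing ID (in the SDK, `AK::SoundEngine::PostEvent` returns an `AkPlayingID`).

```python
from itertools import count


class ToyVoiceExplorer:
    """Toy model of playing instances; a stand-in, not the Wwise SDK."""

    def __init__(self):
        self._ids = count(1)   # source of unique playing IDs
        self.instances = {}    # playing ID -> details of the post

    def post_event(self, event_name, game_object):
        """Record one playing instance per call and return its playing ID."""
        playing_id = next(self._ids)
        self.instances[playing_id] = {
            "event": event_name,
            "game_object": game_object,
        }
        return playing_id

    def rows_by(self, key):
        """Group playing IDs into rows by 'event' or 'game_object'."""
        rows = {}
        for playing_id, info in self.instances.items():
            rows.setdefault(info[key], []).append(playing_id)
        return rows


explorer = ToyVoiceExplorer()
first = explorer.post_event("Play_Footstep", "Player")
second = explorer.post_event("Play_Footstep", "Player")
```

Posting the same Event twice on the same game object leaves two entries in `instances`, and `rows_by("event")` groups both under a single Event row, which is roughly what the display options described above do.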

Voices Graph

The Voices Graph now includes a redesigned filter bar.

The Audiokinetic Spatial Audio team has been hard at work enhancing the authoring capabilities for realistic simulation of dynamic reflections and the complex negotiation of diffraction.

Stochastic ray-tracing now underpins the propagation pathing for reflections and diffraction, increasing scalability while optimizing performance. From a runtime perspective, high-order reflection or diffraction can now be used with less of a performance impact, thanks to this new path-finding algorithm. 4th order reflections are now achievable!

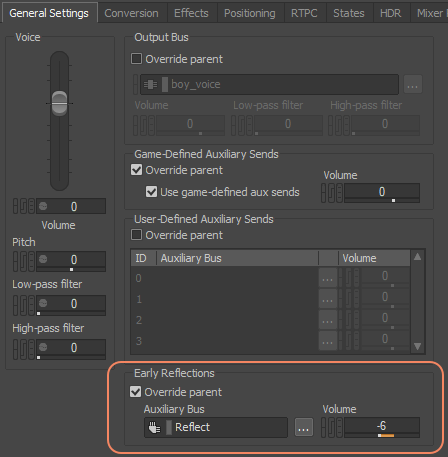

Spatial Audio properties can now be defined on a per-sound basis, allowing the author to choose which sounds are sent to the specified Reflect Aux Bus and whether diffraction is enabled. Previously, these properties were only configurable from the game editor and limited to a per-game object basis; this update unlocks the ability to apply and fine-tune spatial audio independently of the other components of a sound hierarchy.

"Early Reflections" added to General Settings tab

"Enable Diffraction" has been added under "3D Position" in the Positioning tab

Sound can go through walls. Rooms and geometry (per triangle) now expose an occlusion factor, which filters the direct path from the emitter to the listener. Additionally, the wet path now diffracts around geometry edges.

Wwise now supports the playback of 4th and 5th order Ambisonics audio sources, as well as 4th and 5th order Ambisonics channel configurations on busses for runtime encoding.
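To give a sense of what the higher orders cost in channels: a full-sphere Ambisonics signal of order N carries (N + 1)² channels. The helper below is a minimal sketch of that arithmetic; the function name is hypothetical and it is not part of any Wwise API.

```python
def ambisonic_channel_count(order):
    """Return the channel count of a full-sphere Ambisonics signal.

    A signal of order N uses one channel per spherical harmonic up to
    that order, which adds up to (N + 1) ** 2 channels.
    """
    if order < 0:
        raise ValueError("Ambisonics order must be non-negative")
    return (order + 1) ** 2


fourth_order = ambisonic_channel_count(4)  # 25 channels
fifth_order = ambisonic_channel_count(5)   # 36 channels
```

For comparison, 1st order uses 4 channels and 3rd order uses 16, so moving to 5th order more than doubles the channel count of a 3rd order bus.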

Unreal: No longer limited to geometry primitives, the new AkGeometry component applies surface reflectors on either static or collision meshes and automatically maps physical materials to acoustic textures.

Unity: Meshes can now have an Acoustic Texture for each rendering material. Previously, the Unity component for spatial audio geometry (AkSurfaceReflector) used the game object's mesh as the spatial audio geometry. Now, any mesh can be assigned to the AkSurfaceReflector component. Furthermore, you can assign a different Acoustic Texture to any of the mesh's materials.

Rooms now include the ability to post an AK Event, which can be used to play fixed-orientation ambient sounds (room tones) that are spatialized in 360 degrees around the listener when the listener is inside the room, and that transition smoothly to point source emitters positioned at the portals when the listener is outside.

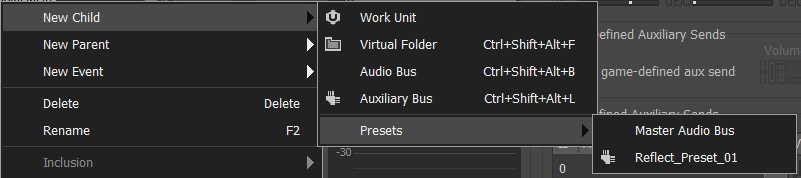

The workflow for saving and recalling presets for different container types has been enhanced, which can have a direct impact on your authoring workflow, especially in the context of Spatial Audio. You can now save complex or frequently used Aux Bus configurations as presets and use them as a starting point when creating your systems.

Please note that the migration to Wwise 2019.2 involves some manual operations, both in the game's code and the Wwise project, for projects using Spatial Audio with a previous version of Wwise. You can refer to the Wwise Spatial Audio Migration Guide for more details.

There are extensive changes to memory management, as Memory Pools have been replaced by Memory Categories which represent memory allocations.

For more information on Memory Management, see Managing Memory.

The Master-Mixer Hierarchy now supports the creation of Work Units (.wwu) throughout, enhancing the productivity of your audio teams, especially for games releasing many DLC packs.

Enhancements have been brought to WAAPI, making it more powerful and easier to use.

A new system called Wwise Console has replaced the legacy Wwise CLI, which still works, but is now deprecated. A number of Wwise operations are available from its command-line interface, including SoundBank generation. This can prove useful when integrating Wwise as part of an automated process, such as daily game builds with audio assets.
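As a rough sketch of how SoundBank generation might be wired into an automated build script, the helper below assembles a Wwise Console command line and runs it. The `generate-soundbank` operation and the `--platform` option are written from memory of the Wwise Console documentation and should be checked against the command-line reference for your version; the function names and paths are hypothetical.

```python
import subprocess


def soundbank_command(console_path, project_path, platform=None):
    """Build the argument list for a SoundBank generation run.

    Assumes the 'generate-soundbank' operation and the '--platform'
    option of Wwise Console; verify both against your Wwise version.
    """
    command = [console_path, "generate-soundbank", project_path]
    if platform is not None:
        command += ["--platform", platform]
    return command


def generate_soundbanks(console_path, project_path, platform=None):
    """Run Wwise Console and return its exit code to the build script."""
    completed = subprocess.run(
        soundbank_command(console_path, project_path, platform),
        check=False,  # let the caller decide how to treat failures
    )
    return completed.returncode


# Hypothetical paths, for illustration only.
example = soundbank_command("WwiseConsole.exe", "MyGame.wproj", "Windows")
```

Keeping the command construction separate from the call that executes it makes the build step easy to log and to test without a Wwise installation.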

Device enumeration was previously only available from the Audio Preferences menu in Wwise authoring. Now, it's also possible to enumerate available devices for audio device plug-ins from the SDK (using AK::SoundEngine::GetDeviceList). This means you can implement an in-game Audio Preferences menu using the SDK. You can also find out at runtime whether an audio device plug-in only supports the default device or whether multiple devices are available. This API is available for built-in audio devices on all platforms that support multiple devices (PC, Mac, Linux, Xbox One, and PS4). External audio device plug-ins, such as the ASIO audio device plug-in, can now also implement device enumeration.

Media IDs can now be stored in a separate project file for alternate management strategies. In that case, Media IDs are no longer saved in Work Unit files, which reduces the number of version control conflicts. Having the Media IDs in a single file gives you more control over, and visibility of, Media IDs throughout the life of the project. For more information on Media Asset IDs, see Managing Media Asset IDs.
